Waymo, the Alphabet-owned self-driving company that uses Jaguar I-Pace electric cars to shuttle people around cities without drivers, is slowly becoming a household name. While Tesla is only just now launching its self-driving car service, Waymo has forged ahead, becoming the leader in the space.

These I-Paces have been operating without drivers for years now, but they haven’t been perfect. As it turns out, developing software that can replicate a human driver isn’t exactly easy, which has resulted in numerous issues for people using the service and the people driving nearby, some more lighthearted than others.

The city of San Francisco discovered yet another shortcoming for Waymo’s cars over the weekend when parts of the city’s power grid went down on Saturday night, leaving roughly 130,000 customers without power. City streets were left without overhead lighting or working traffic signals. Waymo cars deployed on the street ended up stopped in place and unable to proceed, blocking traffic and generating gridlock situations.

The Timeline of Events

Posts on social media showed Waymo’s autonomous vehicles stopped on city streets with their hazard lights flashing on Saturday night, blocking drivers and causing jams.

Waymo itself acknowledged the issue, telling The Verge it suspended services in the San Francisco area to focus on “keeping our riders safe and ensuring emergency personnel have the clear access they need to do their work.”

Pacific Gas & Electric, the utility company responsible for the affected area, announced at 2 p.m. Pacific time on Sunday that it had restored power to 114,000 customers after a substation fire. That evening, Waymo sent another statement to The Verge confirming it had resumed operations in the city:

“We are resuming ride-hailing service in the San Francisco Bay Area. Yesterday’s power outage was a widespread event that caused gridlock across San Francisco, with non-functioning traffic signals and transit disruptions. While the failure of the utility infrastructure was significant, we are committed to ensuring our technology adjusts to traffic flow during such events.

“Throughout the outage, we closely coordinated with San Francisco city officials. We are focused on rapidly integrating the lessons learned from this event, and are committed to earning and maintaining the trust of the communities we serve every day.”

What Exactly Happened Here?

Waymo has yet to reveal why its cars froze up as soon as the going got tough, though that won’t stop me from speculating. Waymo cars don’t strictly rely on data networks to function, since they predominantly use pre-mapped data and their suite of onboard cameras, radar, and LIDAR sensors to make decisions.

SAN FRAN BLACKOUT – WAYMO FROZE, TESLA DROVE

Waymo’s robotaxis got a little too real last night – by completely shutting down when San Francisco’s power outage knocked out traffic lights.

Meanwhile, Teslas on FSD? Kept rolling. No drama, no headlines – just handling chaos… pic.twitter.com/8FinYpAOcB

— Mario Nawfal (@MarioNawfal) December 21, 2025

Nonetheless, the prevailing theory right now is that strained cellular networks are what caused the Waymos to brick themselves. Waymo shared in a blog post last year that when Waymo vehicles encounter unique situations, they come to a stop and reach out to a “human fleet response agent” for help to navigate. These agents can view real-time feeds from the cars and rewind to see how the car got there in the first place.

This communication requires an internet connection with serious bandwidth, and because many people were likely using cellular data instead of Wi-Fi at the time—due to the power outage—the networks were likely strained enough to slow communication between the Waymos and the fleet response agents. The lack of streetlights and traffic signals probably caused several unique situations, too, which couldn’t have helped matters.

Really, it’s not all that surprising this happened; Waymo relies on network connections to those cars, and those servers and routers and other equipment use electricity. If this happens again, the most plausible fix is probably some sort of backup power for crucial systems.

Though Waymo has made huge strides in recent years with its self-driving tech, this situation is proof that engineers have yet to conquer every distinct scenario a vehicle might come across. Driving a car might feel simple because you do it every day, but the reality is far different. There’s a lot of subconscious brainpower and decision-making going on when we drive, even if it feels just as easy as walking.

Tesla Robotaxis were unaffected by the SF power outage https://t.co/uaYlhcSx25

— Elon Musk (@elonmusk) December 21, 2025

Tesla CEO Elon Musk, ever the one to capitalize on a bit of publicity, posted a statement to his social media company X on Sunday morning, saying that Tesla’s fleet of Robotaxis was unaffected by the power outages. That’s not totally surprising, since these cars still have real, actual humans behind the wheel as supervisors. So while Elon might say the cars were unaffected, it’s possible the supervisors were just there to immediately fix anything that went wrong while the streetlights and traffic lights were out.

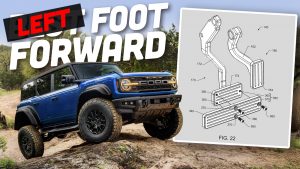

Top graphic image: Kevin Chen on YouTube

Self-driving cars are a fundamentally terrible idea that shouldn’t exist.

Driving is a social, communication-heavy activity that relies on an understanding of human behavior and society (drivers, pedestrians, the context surrounding institutions near roads like various retail establishments, etc. and so on). If you want a form of transportation based on fixed routes and limited judgement/decision-making, you want trains.

At best, you’d be making “virtual railroads” over general road infrastructure, and any crosswalk in front of a self-driving car is only safe in comparison to a level crossing (if trains could quickly and economically brake to a stop at level crossings, they would be safer).

This sort of failure to recognize/value the human context of technology is why Silly-Con Valley technocrat bros’ vision of the future is dystopian, miserable, and again, a fundamentally terrible idea.

If they can’t function without a network connection, they aren’t self-driving. This whole “self-driving” thing is such a crock. “Safer” than humans, but can’t function in so many edge cases that it’s impossible to say it’s safer. I don’t know of any human drivers that don’t know that if the power goes out and traffic lights are out your response is supposed to be to treat them like 4 way stops.

Waymos are programmed to treat a traffic light without power as a 4 way stop. They are also programmed only to enter an intersection if they can fully cross and clear the intersection.

They will not edge out and force their way into a line of human drivers that are not following the law and blocking the intersection.
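The rule this comment describes can be sketched in a few lines. This is only an illustration of the behavior as the commenter states it, not Waymo's software; the function name `dark_signal_action` and its three boolean inputs are hypothetical stand-ins for whatever the real perception and planning systems report.

```python
from enum import Enum


class Action(Enum):
    PROCEED = "proceed"
    WAIT = "wait"


def dark_signal_action(our_turn: bool,
                       intersection_clear: bool,
                       exit_has_room: bool) -> Action:
    """Decide what to do at a traffic light that has lost power.

    All three inputs are hypothetical flags invented for this sketch:
      our_turn           - it is our turn under four-way-stop rules
      intersection_clear - nothing is currently inside the intersection
      exit_has_room      - there is space on the far side to fully clear it
    """
    # A dark signal is treated as a four-way stop: yield until it is our turn.
    if not our_turn:
        return Action.WAIT
    # Enter only if the car can fully cross and clear the intersection.
    if intersection_clear and exit_has_room:
        return Action.PROCEED
    # Never edge out into a blocked intersection.
    return Action.WAIT
```

Under this rule, an intersection that human drivers keep blocking returns `WAIT` every time it is evaluated, which is consistent with the stalls described here.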

Back in the mid 80s, as a TV news photographer, I went dumpster diving with a homeless couple. And I had a thought that some homeless people are going to do better than me and others/probably most of us in an economic meltdown. They had already figured out how to get by with nearly nothing. I actually admired them.

The Russians have allegedly developed a system that will take out all the Starlink and US GPS satellites. If the rest of the internet collapses, and the GPS constellation gets messed up, a lot of people are going to really be hurt.

It’s scary how quickly so much stuff could get messed up (to put it mildly) so seriously.

I’m heading down to see my brain-muddled mother just after Christmas. I know this route so well that I don’t need an app to do that. But I do need gas pumps to work. And credit card readers to work.

I can understand confusion of Waymo computers. They were never trained on how San Francisco looks when pitch black, as there was no way to gather that data in a city that never sleeps. Falling into “I don’t recognize where I am” mode and freezing up is not ideal but at least explainable.

I remember driving home from work one day and found I had entered into a complete blackout. No traffic signals, no sidewalk illumination, no illuminated signs from local businesses. All black except for what’s illuminated by my headlights. On a route that I’ve taken thousands of times, day and night, I didn’t recognize the turnoff to my own home.

I was stuck in that traffic shitshow and from what I saw, while Waymos may have been a problem at some intersections, it was humans who were the worst…

In (very slowly) moving traffic, Waymos were fine from what I saw – same as other cars. I didn’t see either of those two specific intersections in the videos.

But people? People were aggressive angry dipshits, pushing their way into intersections and then blocking them, barely missing pedestrians (some of whom were also idiots, it should be said) and honking all the fucking time.

After taking 40 minutes to go 1 mile (seriously!) I gave up and found a side street and chilled out for an hour until I could go back home.

Why is the failure mode “stop and block intersection” instead of “pull over to the curb”?

they just need to put some nerf bars on them thangs

These taxis are Waymo afraid of the dark than they should be.

I was reading elsewhere that human drivers may have contributed to confused waymos. They’re apparently programmed the way you’d want – traffic lights being out is a 4-way stop – but if none of the humans know what to do in intersections when the traffic lights are out, and behave erratically, then the waymos effectively stopped. (As a side note, I’d bet the reason tesla continued and waymo didn’t was because tesla doesn’t have anything resembling a failsafe)

There are a couple things I can think of that are probably needed… they understood what to do when a traffic light was out, but need additional programming to know what to do when that intersection is also full of humans that don’t know, or are too impatient and want to go now. And failing that, the cars really need a steer and clear mode. If they get really confused, and a human can’t intervene, the cars really need to clear themselves off the road.

I read the same. Waymos are programmed to follow the law. They treat traffic lights that are out as a 4-way stop AND will only proceed if the intersection is fully clear and they can fully clear the intersection. The Waymos were faced with humans continuously blocking intersections. They won’t do what a human would do in the case of an intersection that never clears: slowly creep out and force their way through.

“That’s not totally surprising, since these cars still have real, actual humans behind the wheel as *supervisors*”

FIFY. Or this:

That’s not totally surprising, since these cars still have real, actual humans behind the wheel as backup.

Hypothetical situation: Magnitude 8.8+ earthquake.

Are these now potentially blocking routes emergency vehicles need to take?

Yes, same as the far more numerous cars abandoned by their human drivers.

Eh. After the 1989 Loma Prieta earthquake, some cars were abandoned on the Bay Bridge because the bridge was impassable. However, traffic was slowly moving at the eastern approach to the bridge.

The fatality on the bridge was caused because Caltrans misdirected traffic and a car drove off the edge at the broken section.

The weird nerds will tell you “edge case! edge case! edge case!”

“99.99999999% of the time earthquakes don’t happen! It would be wasteful to plan for what to do should they happen!”

“Waymo cars deployed on the street ended up stopped in place and unable to proceed, blocking traffic and generating gridlock situations.”

That’s a lot more forgivable than the gridlock I’ve been stuck in because multiple human drivers involved in a minor, non-injury accident couldn’t follow the simple instruction to move to the shoulder.

Even if they do, though, sometimes gridlock is caused by human drivers gawking as they pass by.

I think this helps illustrate my feeling pretty well: how is this any better? Like, okay, maybe it’s not worse (though I think it is), but if it isn’t better, what are we doing here?

I disagree. The Waymo cars were almost certainly blocking traffic for the duration of the outage as opposed to looky-loos who eventually get out of the way for emergency vehicles.

Perhaps. If that is what happened Waymo has now identified that as a problem and they can take steps to fix it and apply that update across their fleet.

Meanwhile human drivers will continue failing to yield for *REASONS!*.

I had a discussion with a friend the other day about AI and self driving cars. He was more optimistic than myself but we both agreed on one thing, edge cases. The programmers don’t know what they don’t know and it’s nearly impossible to account for all edge cases. There is an innumerable amount of ‘what if’s’ waiting to trip even the best software based driving systems.

Waymo does not know de way

Anyone could see the road that they drove on was bathed in dark, now it’s almost Christmas and they just can’t self-park. They’ll never get better, they’re happier bricked today.

Where were they going without ever knowing de way?

Add it to Now That’s What I Call Music, Autopian edition!

…and I’m ear-wormed. Thanks, mate.

Peter Frampton also does not know the way. He would like to be shown.

Dionne Warwick has entered the chat

It seems only Sinatra may have gotten it.

JATCO’s favorite singer, Frank Sentra.

I was just in LA last week where Waymos are prevalent. Though I didn’t get to ride in one, I was impressed at their ability to assertively navigate challenging traffic situations, stay in their lane and not do dumb stuff.

If I’m being honest, they seemed to be the best drivers on the road and I’m sure the network will only improve as they work through challenges like this.

Waymos provide several services to other drivers.

I ride my bike around Waymos in Downtown Los Angeles all the time and they give me more space than human drivers do. They do stink for a few days after they burn to the ground if you are wondering.

My daughter’s college is in downtown Atlanta (GA State University) and there’s tons of waymos there. She vastly prefers them to the other rideshares, as well as general human driver population, because on crowded streets, they’re the only ones consistently watching for pedestrians

I’m in the Bay and absolutely welcome self driving cars becoming more prevalent. Waymos are way better drivers than basically any human these days. I’ve had Uber rides with some truly terrible drivers. If I’m safer, everyone around me is safer, and I pay less for a ride, that’s a win-win-win in my books.

Obviously, being an Autopian member, you can pry my fun car out of my cold dead hands, but I don’t think that self-driving cars will spell the end of human-driven cars in general anytime soon, if ever.

My wife and I recently had a chance to try Waymo service in Phoenix. I was VERY impressed – especially compared to the Uber and Lyft drivers we took to and from the airport.

If Waymo is an option in a city I’ll be using that option

Why not do some actual reporting and find out if the Teslas needed human input to keep functioning, rather than insinuating that they did without any evidence?

Well, they DO always need human input to keep functioning since it’s a level 2 driving assistance system, not “full self driving.”

I’m guessing you haven’t tried FSD recently.

Technically no one has, because there is no such thing as “Full Self Driving.”

Because Tesla is the only one with that information? It isn’t gonna be published anywhere unless there was an accident where they were forced to turn over the data.

Assuming Musk is full of shit is always a safe bet

It’s like how shrapnel from the Navy shooting rockets across I5 last summer hit the VP’s motorcade. We only know about it because a CHP cruiser was damaged and the state of California isn’t playing along. It could’ve landed square on a Federal vehicle, even Captain Couch’s own limo, and been kept secret.

Find out from whom exactly? Tesla rather famously canned its PR dept and rarely talks to journalists about anything.

You still need to reach out. Real journalists, of whom few remain, don’t publish wild guesses they make without even attempting to confirm.

Musk is never going to love you. Stop the fanboising.

Pathetic.

Yes, yes you are.

I was there when this was happening, it was nuts! Traffic coming off 101 at Octavia was slow because the traffic lights were out. Going up Fell was really wild because traffic was so light because many of the side streets were blocked by lines of stalled Waymos. At one point, two of the three lanes of Fell St. were blocked by stalled Waymos. We called a friend and they said that their car was momentarily trapped between two stalled Waymos, though I’m not sure how that would have happened.

This all seemed super unsafe in that it was blocking many streets and would have blocked the path of emergency vehicles.

That’s the kind of thing fire truck and ambulance drivers secretly LIVE for 🙂

Operation “Mo-Plow” is a go!

“…they said that their car was momentarily trapped between two stalled Waymos, though I’m not sure how that would have happened.”

It’s really easy.

You’re in a string of cars at a light or stop sign – A Waymo is ahead of you and another behind you. Because you’re not a cautious driver, you’re right on the back bumper of the car ahead of you.

Then the power goes out. And even tho the Waymo left some reasonable space behind you, you didn’t leave yourself a way out ahead, so you’re stuck until oncoming traffic clears and you can pull out of the gap as if you were in a tight parallel parking space, with a lot of back and forth-ing.

At least PG&E didn’t develop the robotaxis or the failsafe action would be “Accelerate quickly into darkness, car will eventually stop itself.”

All things considered, could have been worse. Still really stupid and embarrassing, but maybe the AI is programmed to do exactly what hapless part-time drivers in SF would do, anyway.

10 PANIC

20 IF NO PANIC GOTO 10

15 SET CPU USAGE 100%

So how many years before we have an article about how adding bedazzled accessory rings to your self-driving car can accidentally lead to it crashing into school buses?

FFS, traffic lights go down all the time for various reasons like maintenance, power outage, equipment failure, etc.

The fact that there wasn’t programming in place to deal with this situation is inexcusable.

My guess is the software engineers are just weird nerds who either can’t, or simply refuse to understand the concept of “shit happens”

I wonder if the infrastructure subtly influenced the expectations here.

To put it another way, there’s yet another reason why they didn’t build these for long-haul suburban or rural situations or this probably would have been an immediate consideration.

In their defense, every time we have a power outage and one set of lights goes to flashing red, the other to flashing yellow, everyone seems completely confused. I don’t know who to expect LESS from — humans or clankers…

Beyond all the self-driving hype, has anyone actually done a rigorous analysis if all this technology is cost effective vs a human minion driving a cab?

Admittedly 40 year old data but at GM in the 80s we used to joke that it took two EDS software engineers to replace one UAW worker with one robot.

That’s what I’ve been thinking – the tech industry will happily spend a trillion dollars so they don’t have to pay a cabbie $55k a year

Once we spend the trillions of dollars on AI/robots/self-driving cabs the AI will be able to figure out how to make it cost effective. So doing a pathetic “human-brainpower” analysis at this point is a waste of time and an obstacle to progress.

/s, just in case, but god it’s depressing that someone could believably be out here preaching the gospel of Big Tech like this.

At that point, the AI will determine humans will be cheaper for the next 468 years.

The main benefit of using a robot over a human is consistency; not having to worry about the human was a side benefit.

So these things brick themselves and block the road. And if someone dies because an emergency vehicle can’t get through? As I said in the comments to another article, I did not sign up to be a guinea pig and risk my life so these companies could gather data. These things do not belong on public roads until they can show a much higher level of ability.

I wonder if they continue to park at a curb or just abandon in place at the time of outage?

Haha. I can only assume you are being sarcastic.

That having been written, if someone could find a way to find hundreds of parking spaces in SF at a moment’s notice, then that would be worth a lot of money. Perhaps more money than “self driving” cars.

Years ago, I went to visit a friend in SF and ended up looking for parking for 45 minutes, it would have been quicker to take public transportation from across the bay.

I lived in SF for 20 years, and your 45 mins for parking is typical.

Which is why I eventually got rid of the car and lived with no car at all for 14 of those 20 years.

Because a City is no place to own a car.

“Because a City is no place to own a car.”

The most important lesson in all of this.

I’ve seen PLENTY of human drivers that refused to yield to emergency vehicles, including people driving at speed in the left lane with lots of room to move over.

What was the penalty? Nothing. The emergency vehicle had no time and had to pass on the right or even cross over the median to use the oncoming lane.

So that makes this OK? Can’t we have higher standards for these things, since they claim to be better? It’s not like this is a situation you can’t foresee. What if SF has an earthquake and the power is out for days? How do you get these vehicles out of the way?

This IS the way to a higher standard!

Human driving is as good as it’s going to get yet humans still disregard rules when it suits them, often resulting in great harm to others. Nobody needs to rubber neck yet they do even though it messes up traffic behind. Drivers are specifically instructed to move minor noninjury accidents to the shoulder but every day there are two or more idiots who stay right in the middle of the freeway, needlessly dragging in everyone else into their drama.

Nobody needs to speed, yet lots of people do because they find it thrilling and fun, leaving safety as the responsibility of others. Humans run red lights, ignore signs, drive the wrong way, get lost, cross three lanes of traffic, tailgate to intimidate, lose interest, back up on the freeway to make an exit, and do lots of other stupid things.

Human driving misbehavior is so normal that people don’t even remember that the woman who was dragged 20′ by a Waymo was knocked into that Waymo by a meat bag in an Altima. That meat bag knew exactly what they did and deliberately fled the scene while the Waymo pulled over in as short a distance as was physically possible. Had that Waymo been a human driver doing exactly the same responsible behavior there would have been at most surprise and relief, yet since it was a robot the reaction was condemnation for not having blocked traffic because *something* happened.

Is AI perfect? No. Is it better than humans? Probably, and while humans aren’t going to get any better, AI will.

“What if SF has an earthquake and the power is out for days? How do you get these vehicles out of the way?”

Every disaster movie ever shows exactly the same thing, roads full of cars abandoned by humans. So I’m pretty sure there’s a plan for that, probably involving tanks and bulldozers.

OTOH AI CAN’T abandon the car. It IS the car. So it can keep trying to move out of the way long after its humans have fled.

There seem to be a lot of situations that they could be training their systems on *before* they get on the road. Power outage/loss of comms seems like it should have been pretty high on the list. I am not convinced that they’ve gotten these systems smart enough to be practicing on the roads. We’ve seen where they block the road, and there’s no one for the cop to yell at to move. If that’s a human, they’re made to move (or dragged out and the car gets moved).

Using the fact that some humans suck at driving isn’t an excuse for these vehicles. When the power went out, what percentage of the human drivers just abandoned their vehicles in the middle of the street? 100% of the Waymos did. They didn’t try to move out of the way, they just stopped.

One of the problems with these vehicles isn’t just bad driving. Humans do that too as you note. The problem is weird, unexpected driving. You might expect humans to drive too fast, or too slowly, but you don’t expect them to stop dead just because there’s a tiny obstruction that’s easily driven around. You can’t wake them up from being inattentive with a car horn. And you can’t make eye contact to make sure that the car knows you’re there. Apparently the cops can’t walk up and ask them to move over. These seem like problems that they are attempting to solve by being on public streets. Solve these on a test track first.

When humans abandoning their vehicles can’t even be trusted to do so properly so emergency responders can move them later:

“As the devastating Palisades Fire tore through parts of Southern California Tuesday, firefighters used a bulldozer to clear the roads and move abandoned vehicles left by evacuating residents.”

….

“Actor Steve Guttenberg told local media outlet KTLA5 that drivers left their cars on the road and fled the scenes after traffic jams hindered evacuation. Guttenberg said he requested several drivers to leave the keys in the car so volunteers or responding crews could move the vehicles around to clear the way for authorities and firefighters, but most did not pay heed.

“What’s happening is people take their keys with them as if they’re in a parking lot,” Guttenberg had said to KTLA5. “This is not a parking lot. We really need people to move their cars. If you leave your car behind, leave the key in there so a guy like me can move your car so that these fire trucks can get up there”

https://www.usatoday.com/story/news/nation/2025/01/09/palisade-fire-bulldozer-abandoned-cars-video/77576231007/

This one brings up an interesting scenario, humans abandoning their vehicles when they can’t see, as in a whiteout:

https://cdllife.com/2022/chp-says-greyhound-driver-caused-traffic-jam-by-abandoning-bus-on-i-80/

This is exactly one of the scenarios where AI is promised to outperform humans thanks to embedded roadway sensors, GPS and other navigational tools unavailable to humans.

So again how are humans doing any better?

I didn’t say humans were doing better. AI isn’t doing any better, as we just saw. AI is “promised” to do better? When? When that happens, put it on the road. But for now, AI is doing worse in some cases. We have instances of them stopping dead because there’s something slightly protruding into the street instead of driving around them. Or sitting behind vehicles that are not moving forever when they could drive around. Or in the case of Teslas, driving into clearly stationary items.

And you seem to have missed one important point – of the millions of drivers on the road, a small fraction drive poorly or oddly. In the case of AI vehicles, it’s 100% of them failing. What happens when there’s more of them on the road?

The reason there aren’t more of them on the road is to make sure problems like this are ironed out before wide scale deployment. This event was useful to highlight a shortcoming of the system but crucially nobody was hurt. Why? Because AI chose safety rather than risk driving blind. Humans have no problem driving blind even at extra freeway speeds.

“AI isn’t doing any better, as we just saw.”

Not true. Overall AI is already doing FAR better:

https://arstechnica.com/cars/2025/03/after-50-million-miles-waymos-crash-a-lot-less-than-human-drivers/

Again AI will only get better as events like power outages highlight problems and those problems are addressed. Humans OTOH will not improve.

If the tables were turned and it were humans asking permission to drive in a transport environment dominated by AI they’d be laughed out of the room.

Having lived in the city for 13 years, I can attest that *all* drivers seem to lose their brains when all the signals go out. The Waymo cars were just fitting in.

Seems like a fair number of drivers there never had brains. That place can be a real zoo to drive around in – which is why I believe Waymo picked a good place to develop their vehicles.

Waymo actually developed their vehicles and self-driving tech on the remains of the old Castle AFB just north of Merced.

SF is the first place they were rolled out to the public.

They have not continued their development in SF?

https://www.wired.com/story/google-waymo-self-driving-car-castle-testing/

https://waymo.com/blog/2020/09/the-waymo-drivers-training-regime

https://www.digitaltrends.com/cars/waymo-self-driving-car-structured-testing-continues-during-pandemic/

SF was the first place they rolled out in public with safety drivers for a number of years, which is how they continued development on actual city streets.