I envy people who can read lips. Being able to see what people are saying, without having to actually hear them, feels like a type of real-life superpower to me. Whenever I’m scrolling on TikTok and come across one of those videos of a lip-reader reciting something said by a celebrity at the Oscars or a professional athlete on the field, I can’t help but watch the whole clip.

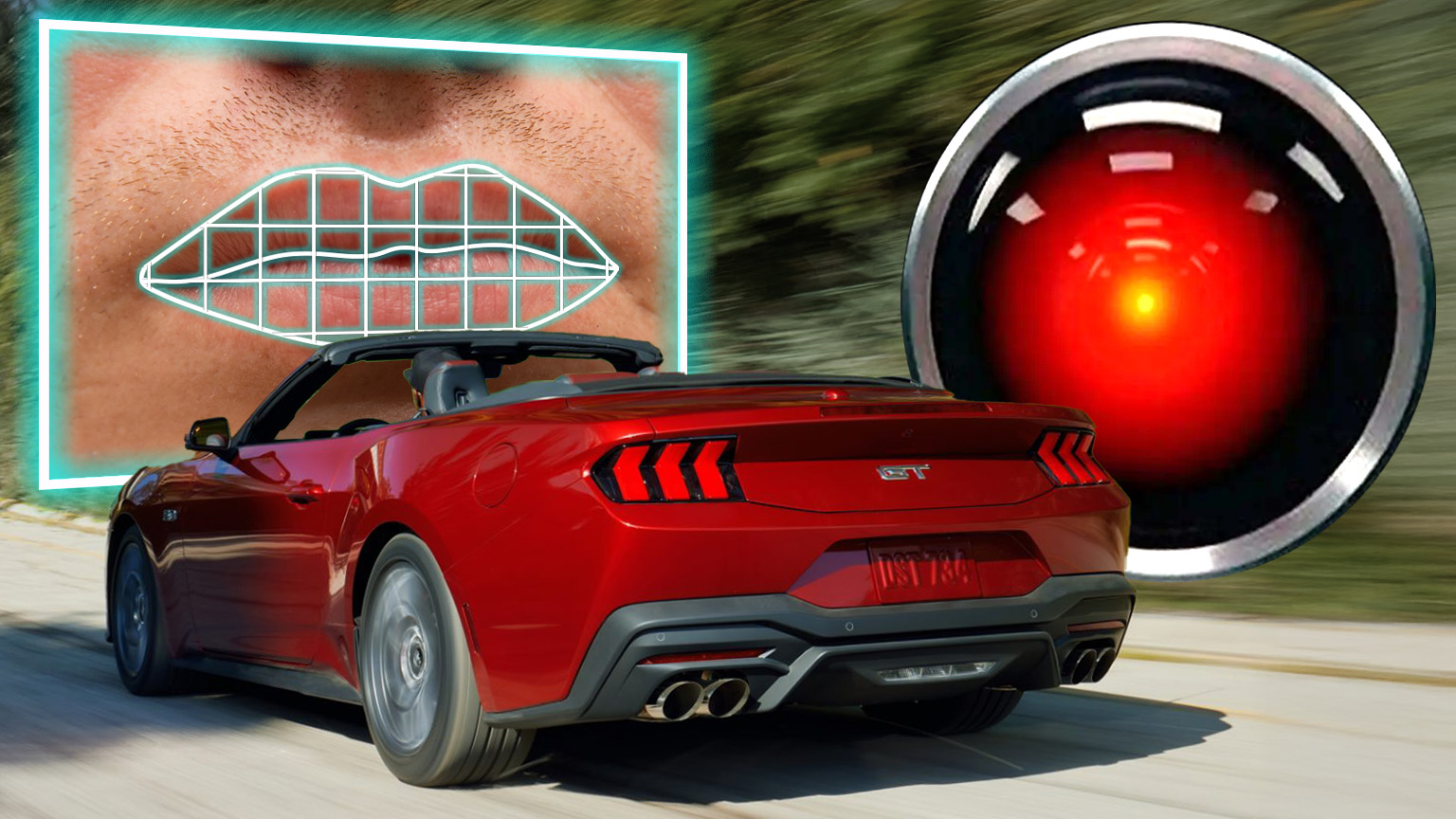

Soon, your new Ford might be doing the same thing. According to a patent application filed by the American automaker, it has come up with software that can use interior cameras to read the lips of occupants, in the event normal voice controls aren’t possible (say, like if you’re rolling top-down in a convertible and the wind is interfering with the microphone).

In addition to reading lips, the patent application proposes using the cameras to read facial expressions alone as commands. Considering how expressive I get behind the wheel sometimes for no reason at all, I’m not so sure about that one. Either way, it’s worth taking a closer look at how all of this works.

The software side of this patent application is actually pretty straightforward. Ford pitches this specifically for use in a convertible, where wind buffeting with the top down, the doors removed, or the windows open can raise cabin noise to the point where the microphones no longer function properly. From the patent:

The vehicle can determine using image data and/or other sensor data that the vehicle is in a convertible state. The vehicle further determines that an ambient noise level in the vehicle is higher than a threshold. The vehicle then switches the mode of operation in order to clearly receive audio input and interpret the audio input. The vehicle may enable a lip reading mode and/or a gesture and facial expression detection mode in order to determine words spoken by a user of the vehicle.
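To make that decision concrete, here is a minimal Python sketch of the check the patent language describes: is the car in a "convertible state," and is cabin noise above a threshold? Everything named here is hypothetical. The patent application gives no numbers, so `NOISE_THRESHOLD_DB`, the `CabinState` fields, and the function names are my own assumptions, not Ford's implementation.

```python
from dataclasses import dataclass

# Hypothetical value; the patent application does not state a threshold.
NOISE_THRESHOLD_DB = 75.0


@dataclass
class CabinState:
    """Assumed sensor summary; field names are illustrative only."""
    top_down: bool
    doors_removed: bool
    windows_open: bool
    ambient_noise_db: float


def is_convertible_state(state: CabinState) -> bool:
    # Any open-air configuration counts as the "convertible state" here.
    return state.top_down or state.doors_removed or state.windows_open


def should_offer_enhanced_mode(
    state: CabinState, threshold_db: float = NOISE_THRESHOLD_DB
) -> bool:
    # Both conditions from the patent text must hold: the car is open
    # AND the ambient noise is higher than the threshold.
    return is_convertible_state(state) and state.ambient_noise_db > threshold_db


if __name__ == "__main__":
    cruising = CabinState(top_down=True, doors_removed=False,
                          windows_open=False, ambient_noise_db=82.0)
    print(should_offer_enhanced_mode(cruising))
```

Note that a loud cabin alone isn't enough in this sketch. With the top up and the windows closed, the function returns `False` no matter how hard you're cranking the stereo.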

Here’s a flow chart of how the software determines whether there’s enough noise in the cabin to switch to what Ford describes as “enhanced mode”:

In this case, “enhanced mode” represents the mode within the infotainment that uses cameras to start reading lips and facial expressions. In another flow chart, you can see how engineers break down those two functions into two separate modes:

According to the patent application, switching to enhanced mode wouldn’t be totally automatic. In this setup, the driver and/or passenger would be prompted by the infotainment screen to select which modes they’d like to enable—one, both, or neither. Here’s a drawing from the application that shows what that might look like, embedded into Ford’s classic SYNC infotainment layout:

How The Lip Reading And Facial Expression Analysis Works

Ford says it can use cameras to read the lips of the occupants, passing the images into AI that’s been trained on lip reading to convert the lip movements into written words, which the computer can then translate into a command, without ever having actually heard what the person said.

The lip reading mode, if enabled, allows the vehicle to determine what the user is speaking even if the speech is not audible or is partially audible. The one or more cameras of the vehicle can capture the movements of the user’s lips. Other sensors may capture the gesture data and/or facial expressions as the user is speaking. The captured video and sensor data are processed using machine learning algorithms that are trained on large datasets of lip movements and corresponding speech to learn the patterns and nuances of lip reading.

It’s not just cameras that this system might use. The car could also use an acoustic, sonar-style sensor to track lip movements, which sounds pretty neat:

The vehicle may also use acoustic signals to detect facial movements. For example, the vehicle may emit inaudible sound waves and analyze the echoes that bounce back from the user’s lip and mouth. The machine learning algorithms can then interpret the lip movements and translate them into text or spoken words.

The facial expression mode would use the same suite of sensors, but instead of just reading lips, it would track your entire face and head to determine movements and translate those into commands or responses.

As Ford explains it, the facial expression software would be used to determine “whether the user is experiencing difficulty in interacting with the communication system.” From the application:

For example, humans may perform certain gestures such as nodding of the head, shaking of the head, rolling the eyes, etc. in response to verbal communications. In the instance where the user is nodding his/her head, the vehicle may determine that the user is acknowledging a verbal conversation or an audio output via the speakers of the vehicle. The vehicle may then conclude that the current level of audio volume and operating conditions for the communication systems are adequate based on the user gesture and/or facial expressions.

On the other hand, if the user shakes his head or appears confused based on his facial expressions, the vehicle may determine that the user is having an issue with the communication system and may take certain actions such as increasing the audio volume or slowing down the pace of the audio output.
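The nod-versus-head-shake behavior in those two passages boils down to a small lookup, which can be sketched like this. It's only an illustration under my own assumptions: the `Cue` names, the step sizes, and the choice to raise volume first and slow the speech only once volume is maxed out are all hypothetical. The patent just lists louder audio and a slower pace as example actions.

```python
from dataclasses import dataclass, replace
from enum import Enum, auto


class Cue(Enum):
    """Hypothetical labels for what the cameras think they saw."""
    NOD = auto()
    HEAD_SHAKE = auto()
    CONFUSED = auto()
    NONE = auto()


@dataclass(frozen=True)
class AudioSettings:
    volume: int         # 0-100
    speech_rate: float  # 1.0 = normal pace


# Assumed limits and step sizes; none of these come from the patent.
VOLUME_STEP = 10
MAX_VOLUME = 100
RATE_STEP = 0.1
MIN_RATE = 0.5


def respond_to_cue(cue: Cue, settings: AudioSettings) -> AudioSettings:
    # A nod (or no cue at all) is read as "I can hear this fine."
    if cue in (Cue.NOD, Cue.NONE):
        return settings
    # A head shake or confused look is read as trouble hearing:
    # turn it up first, and slow the speech once volume is maxed.
    if settings.volume < MAX_VOLUME:
        return replace(settings, volume=min(MAX_VOLUME, settings.volume + VOLUME_STEP))
    slower = max(MIN_RATE, round(settings.speech_rate - RATE_STEP, 2))
    return replace(settings, speech_rate=slower)


if __name__ == "__main__":
    current = AudioSettings(volume=95, speech_rate=1.0)
    current = respond_to_cue(Cue.HEAD_SHAKE, current)  # volume capped at 100
    current = respond_to_cue(Cue.CONFUSED, current)    # now slows the speech
    print(current)
```

This also shows why I'm wary of the idea: in this sketch, every eye-roll-adjacent expression that gets classified as "confused" makes the car louder.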

Depending on how you feel about the equivalent of an all-seeing eye constantly watching your every move while you drive, this seems like a pretty neat and useful feature for a convertible like a Mustang or a Bronco with its doors removed. Normally, voice controls can be rendered useless by the wind in these cars, but with a system like this in place, you wouldn’t even have to raise your voice to get a command across.

There are some obvious privacy concerns here—the patent even mentions that the lip-reading and facial detection modes can function off of a cloud-connected server. That means your face and whatever you say might be stored at a data center somewhere and used to train algorithms without you ever knowing. But it’s not like in-cabin cameras and face sensors are a pie-in-the-sky concept—they already exist in some production cars. Subaru’s DriverFocus system, introduced in 2019, for example, uses an infrared camera to monitor driver fatigue and alert them if it detects the driver is distracted or starting to fall asleep.

If anything, this is simply a new idea for how to better utilize those sensors to make life easier for occupants. I’ll give props to Ford for that.

Top graphic images: Ford; DepositPhotos.com; Warner Bros.

Will it be able to find the nearest Jack in the Box while I’m cranking Metallica?

If Ford or any manufacturer wants to sell to what I assume is a growing number of people that say Hell No to privacy invasion, it is easy for any of them to go in the other direction. Offer the reliability we had 15 years ago, with stand alone modules for ECU and whatnot. And their recalls would plummet as sales increase. Give us a 5.0 in a low Cd body that gets 50mpg. hwy. and I’m buying.

How do we get our privacy back? No one asked for this.

You stole my avatar. 🙂

So now I have to not only remove the modem and sim card from any new car I buy, but also several interior cameras.

Fuck the future. Fuck every fucking corporation that’s constantly abusing everyone in the world. Guillotines keep looking better every day.

Nevermind the privacy issues, cloud based means it shits the bed every time there isn’t 5 bars of cell signal. Which is ya know, all the goddamn time in places cars are driven.

Toyota has a stupid cloud based system so it can recognize more than the 5 commands the onboard 2005 era processor can handle, and it constantly says “network busy” and other stupid errors.

“I envy people who can read lips. Being able to see what people are saying, without having to actually hear them, feels like a type of real-life superpower to me. Whenever I’m scrolling on TikTok and come across one of those videos of a lip-reader reciting something said by a celebrity at the Oscars or a professional athlete on the field, I can’t help but watch the whole clip.”

Hahaha. If you had bothered to do more research into lipreading abilities, you would see it’s mostly myth.

The best lipreaders in the English language can only identify the spoken words 30-40% of the time unless they are able to hear (via hearing aids or cochlear implants) and identify the voiced and non-voiced letters (b, p; d, t; f, v; etc.) by listening, which increases the comprehension to 60%. Many words in English look similar but sound completely different: man, ban, pan; mother, bother; high school, asshole; six, sex; education, ejaculation; and so forth. They fill in the blanks by looking at the context and figuring out which one fits.

Try this one: “Tell you what, I am going to tell you what.” Clue: a ski town in southwestern Colorado that starts with T.

Same in German with so many words looking so similar but meaning so different, especially the vowels with and without umlaut: Bienen (bees), Beinen (legs); fordern (demand), fördern (encourage); Mama, Papa; achten (to respect or hold in high esteem), ächten (to ostracise somebody); etc. The long compound words can look too much like a sentence and be misunderstood too easily. It’s bad when there are many regional dialects in Germany that don’t follow the Hochdeutsch (Standard German) pronunciations, especially the Swabian German (Stuttgart area and hardest to understand even for hearing Germans) and Schwiizerdütsch (Swiss German).

When the person speaks fast, forget it. When the person has a moustache (especially walrus or motorcycle), impossible. When the person doesn’t stand face-to-face when speaking, hard to tell what is said. When the person barely moves their lips (especially the actor, Peter Welles), adios. When the person speaks in technical jargon or uses names, brain freeze. When a person for whom English is a foreign language speaks, “Sorry? What?”

Reading lips is very exhausting mentally. The lipreaders must concentrate so hard on understanding that they often forget the rest of the dialogue. After five to ten minutes, the lipreaders just want to give up and resort to sign language or written communication. Deaf people hate it when asked if they can read lips. They usually retort by asking, “Can you sign?”

This reminds me of the old joke of mouthing “olive juice” to someone you know.

Yeah, not much of a joke to us deaf people who have to put up with that crap from hearing people on the reg…

Obviously, I wasn’t talking about people doing that to hearing impaired people and can’t imagine anyone thinking that would be appropriate to do to a deaf person as a joke. I’m sorry if assholes have done that to you.

A thousand times yes. Yeah, try saying “elephant shoe” or “olive juice” to someone without voicing the words out loud and see if they can guess what you’re saying.

As a person deaf since birth I all too often have people ask me if I can lipread, a question to which I just shake my head in response, or, worse and more obnoxiously, they’ll tell me about how they knew a deaf person in childhood who could lipread perfectly (yeah, the clue is there in the word “childhood” in that they were kids themselves who were easily fooled.)

The first time I tried watching the film 2001: A Space Odyssey I was in high school and it was on cable TV; when they got to the scene where HAL 9000 lipreads the astronauts talking to each other IN PROFILE I was so disgusted I turned the TV off and cursed Stanley Kubrick even more than Shelley Duvall must have after the 127th take of the baseball bat scene in The Shining.

I can’t dispute statistics or the chances of anyone being successful with lip reading, given reduction and dialects. I am quite good at grasping googly translate gibberish and getting useful English from it, however.

I have met someone who could read lips and speak with astounding ability, who cannot hear.

He learned to speak English using a candle in front of his mouth, and learned to read lips at the same time. This was clearly a very long and intense process. I doubt many people have the opportunity or the inclination to go through that. I would have never known he was deaf if he hadn’t told me, and he only told me because I asked about his accent, which I took to be Australian. This upset him, as he was quite properly proud of his speaking clarity. I can assure you his speech is better than 99% of the population. We had a long conversation, many things he would have struggled with guessing from context, and he only missed things when I turned away. It was my first time in the desert, and I was generally distracted, as you’d expect.

One of the most remarkable people I’ve ever met, and there are a few.

Since then, I’ve taken note of how good many deaf people I meet are at nonverbal communication, not talking ASL, just creative, from pointing at what they want to repair diagnosis. I try to keep that approach in mind.

It works with feral cats too.

Open the god damn door car!

I’m sorry Dave, I’m afraid I can’t do that.

Although you took very thorough precautions in the car against my hearing you, I could see your lips move….

A piece of tape will stop that easy. It’s worked on laptop cameras for years.

i don’t want to talk to my car. i never want to talk to my car. what would we even talk about? this is stupid. Also i don’t want interior cameras in my car for privacy.

“Ford’s New Suspension Breakthrough Rides Better and is Cheaper to Maintain.” That’s a headline car buyers want to read. Instead we essentially get “Ford Spies On You and Sells Your Most Private Thoughts to Meta.”

Honestly Ford have really nailed suspensions. They have lots of different suspensions, and it’s the only brand where a suspension component has never broken for me.

Dodge is the worst.

How about no.

No, stop this. I don’t ever want to talk to my car anyway and I haven’t met anyone who does.

Voice control is just deeply annoying. I’d much rather use a button that won’t interrupt my music for 30 seconds while it misinterprets what I’m asking.

Somebody please show me the venn diagram of people who drive their vehicle with doors off, and people who like operating it with voice commands.

O O

Yep. In case the comment parser limits the allowable number of spaces, it is “lots”

O O

Hey, here’s a wacky alternative – hard buttons and controls so we don’t have to have constant monitoring by our Ford overlords!

First: Even with all those nifty flowcharts, Ford will get the software logic wrong, and have to recall the system for fixes a few times.

Second: the expression recognition mode uses “inaudible sound waves”, which, while not RF, might drive my dog nuts? (Thank god she doesn’t drive.)

Seriously, this system sounds audibly creepy to me, though I’m not quite sure why.

So, when someone uses the system, the car misreads what the driver’s saying, then crashes, you’ll get a different sort of bad lip-reading video.

Ultimately this will be used to monetize your actions while driving.

Did he just “micro-smile” when passing a McDonalds sign? Send him a coupon.

He looks angry..let the insurance company know.

Look, there’s a dog in the back seat. Put a Purina commercial on the dashboard.

Of course, you will have the option to opt out, but you’ll lose your “connected driver” discounts…

Hard pass on any car that has this and similar tech.

Can someone please confirm that there may be regulations for the 2027 model year forward that mandate interior cameras and radar to “monitor” drivers?

That tech, plus digital currency and a digital ID and we can complete an authoritarian regime where you have to “earn” access to use your car, your funds and to unlock access to things with your ID.

I keep saying “talking to control a computer is like walking in place to make your car move”. I’m devastated to realize Ford probably took that as a suggestion.

Someone please take away Ford’s ability to patent things, it’s always horrifying.

Read my lips: “No Thanks”

Read my lips: no new cameras

Meh, we are all freaking out about this, when I think it will never SYNC properly.

I’m going to end up with Wilford Brimley level facial hair, or the screens are going to display nothing but cattle pooping and worse when I’m in traffic.

Assuming Ford is still in existence.

The Chinese are eating Ford’s lunch in Europe – and it doesn’t help that Ford no longer has an actual car to sell there (other than the Mustang – whatever) since they’ve discontinued the Ka, Fiesta and Focus – and they don’t expect to have any new cars available for sale for 2 years while they work on rebadging some new Renaults.

https://www.motor1.com/news/791998/ford-swagger-renault-based-cars/

Meanwhile, Ford is losing sales Stateside because they don’t have enough low-end/low trim vehicles to sell.

https://www.motor1.com/news/791950/ford-q1-2026-sales-results-numbers/

With nothing to sell but big expensive SUVs and Trucks in the current market environment – How long can Ford last?

Great, Ford going down the Microsoft Recall dark alley.

Darker still if the cloud AI mentioned is a Palantir product.

This shows up in a car, I’m never buying it.